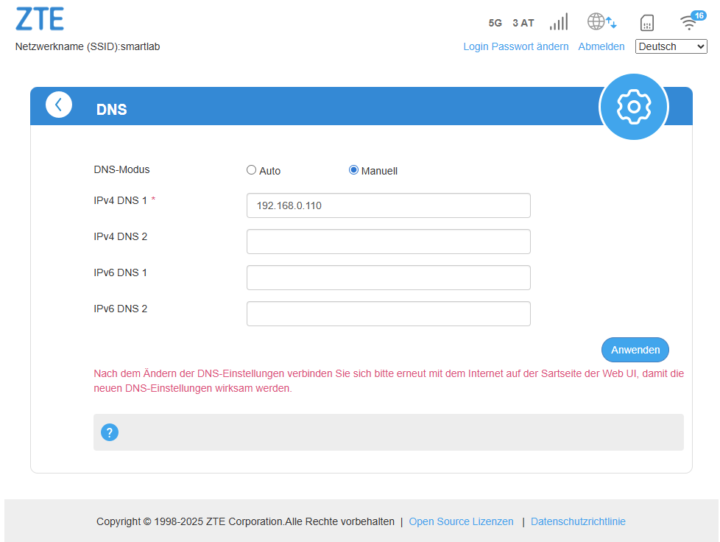

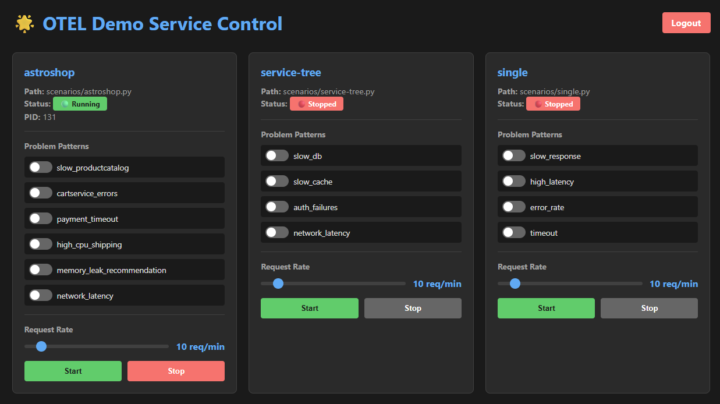

Running OpenClaw on a Synology DiskStation is a convenient way to host it permanently in your home lab without dedicating a separate server. Synology’s Docker support makes deployment straightforward, but a few details need care, especially networking and persistent storage. This guide explains how to run OpenClaw […]

Run OpenClaw AI Agent on a Synology Disk